The Amazon CloudFront CDN Shaved Off Up To 50% of Our Recorder Client Loading Time

When user numbers start to grow, one can expect that they will start accessing your service from different geographical regions. Relying on servers located in just one particular region (Europe in our case) will noticeably impact users who access your service from other regions of the globe.

In our pursuit to deliver a fast, reliable video recording client, we started investing more of our time in upgrading the server infrastructure to ensure low latency and high availability.

The first step was to spread the work of delivering the Pipe client files across two servers behind a load balancer. With this setup we could sleep at night knowing that the video recording client would still be delivered if one of the web servers suffered a malfunction or connection problem. Although this ensured high availability, it did not improve load times for users located outside Europe.

CDN to the Rescue

This is where a CDN (content delivery network) helps. A CDN speeds up the loading times of static and dynamic web content such as .html, .css, .php and image files by distributing them through a worldwide network of data centers called edge locations.

There are 2 ways in which your users’ requests are routed to the nearest CDN edge location:

- DNS: any request to a particular resource is dynamically routed by the CDN’s name servers to the edge location that can best serve the user’s request (this is typically the nearest edge location in terms of latency). For a long time this routing relied on the IP/location of the DNS resolver, not on the IP/location of the user’s actual computer.

- Anycast: with anycast CDNs, the IP address returned by the name servers for a particular subdomain (like cdn.addpipe.com) is not tied to one server but is shared by several edge locations. When requesting a resource from an anycast CDN, network routing automatically takes you to the nearest edge location that announces that IP.

Amazon CloudFront

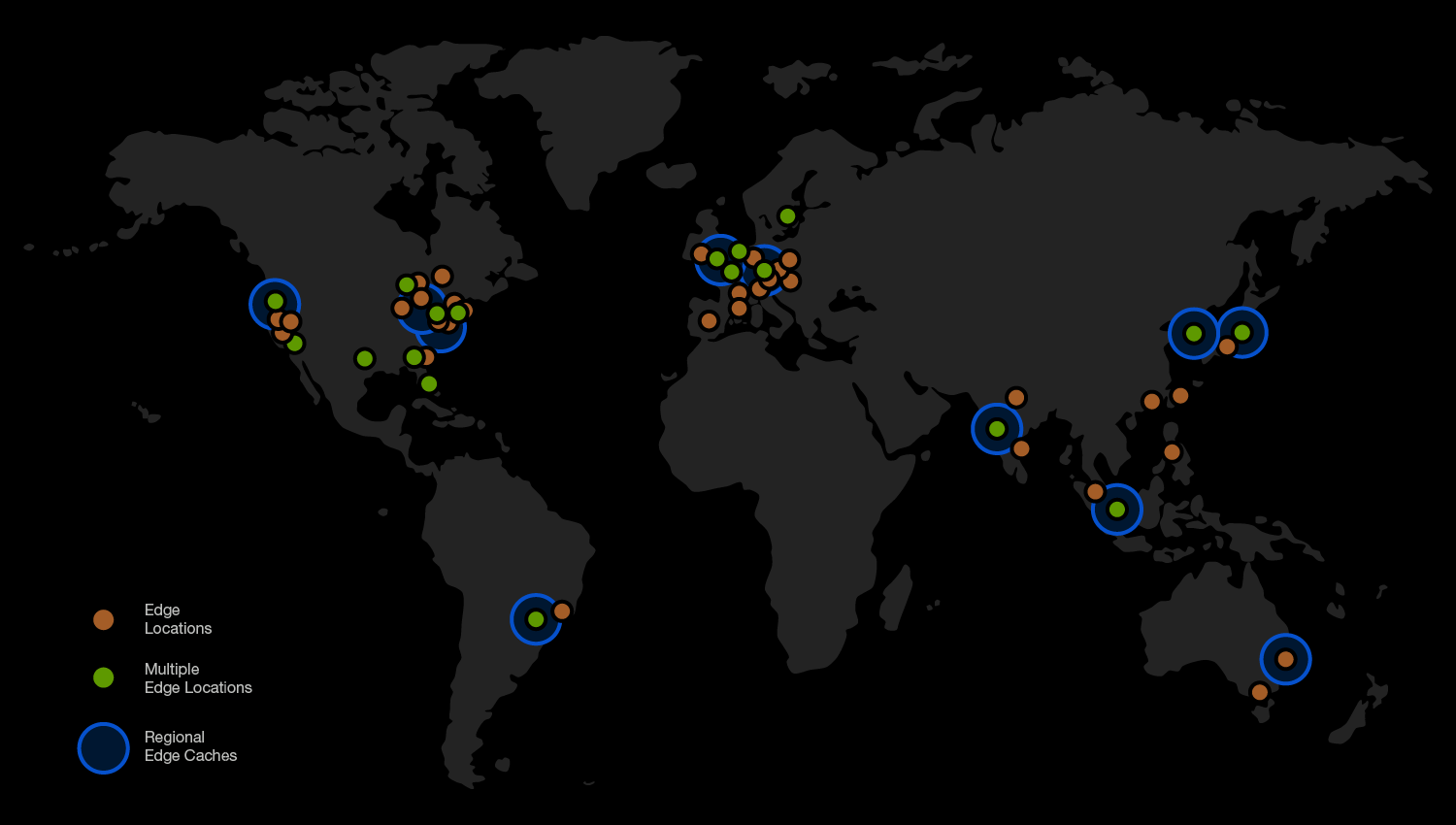

For Pipe’s CDN we went with Amazon’s CDN service, named CloudFront. It currently uses a global network of 96 Edge locations and 11 Regional Edge Caches in 55 cities across 24 countries. You can see them all here.

CloudFront uses DNS to route users to the closest edges. At the basic level the routing works as follows:

- The browser makes a DNS request for the IP of cdn.addpipe.com

- If the DNS server that handles the request does not have the IP, it asks the root DNS servers

- If the root servers don’t know the IP either, they reply with the name servers for cdn.addpipe.com

- The DNS server now asks the name servers for the IP of cdn.addpipe.com

- The name servers will now reply with the IP address for an edge server which is geographically closest to the DNS server (or device) that made the initial request.

- The DNS server passes the received IP address to the browser and caches the result.
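The last two steps can be sketched as a toy model. This is only an illustration: the edge list, the coordinates and the `Resolver` class are hypothetical, and a real CDN routes on measured latency rather than on plain geographic distance.

```python
import math

# Hypothetical edge locations (name -> (latitude, longitude)); not CloudFront's real list.
EDGES = {
    "amsterdam": (52.37, 4.90),
    "new-york": (40.71, -74.01),
    "sydney": (-33.87, 151.21),
    "sao-paulo": (-23.55, -46.63),
    "mumbai": (19.08, 72.88),
}


def haversine_km(a, b):
    """Great-circle distance in kilometres between two (lat, lon) points."""
    lat1, lon1, lat2, lon2 = map(math.radians, (a[0], a[1], b[0], b[1]))
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * 6371.0 * math.asin(math.sqrt(h))


def nearest_edge(resolver_location, edges=EDGES):
    """Stand-in for the CDN name servers: pick the edge closest to the resolver."""
    return min(edges, key=lambda name: haversine_km(resolver_location, edges[name]))


class Resolver:
    """Toy DNS resolver that caches the answer it receives for each hostname."""

    def __init__(self, location):
        self.location = location
        self.cache = {}
        self.upstream_queries = 0

    def resolve(self, hostname):
        if hostname not in self.cache:
            # Cache miss: ask the (simulated) CDN name servers.
            self.upstream_queries += 1
            self.cache[hostname] = nearest_edge(self.location)
        return self.cache[hostname]


melbourne_resolver = Resolver(location=(-37.81, 144.96))
print(melbourne_resolver.resolve("cdn.addpipe.com"))
print(melbourne_resolver.resolve("cdn.addpipe.com"))  # answered from the cache
```

Note that the answer depends on where the resolver sits, not on where the browser is, which is the limitation of DNS-based routing mentioned earlier.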

CDN Setup

For our origin server (the server where the original files reside) we’ve chosen to use our new load balanced servers. From here, CloudFront edge servers pull the files when needed.

CloudFront provides powerful tools for setting up a CDN:

- CloudFront can handle both HTTP and HTTPS requests and can be set up with custom SSL certificates. Pipe uses both types since it needs to deliver the video recording clients over HTTP or HTTPS.

- CloudFront can also allow most HTTP methods: GET, HEAD, OPTIONS, PUT, POST, PATCH, DELETE. We are mostly using GET requests, but we also have a few OPTIONS and POST requests.

- We’ve also found query string forwarding and caching useful for GET requests, because some of our files rely on query strings in the requests made to them. CloudFront will cache the returned response on the edge server.

- CloudFront can also forward the HTTP headers of your choice to the origin. We are forwarding the Accept-Language header because we use it to detect the language preferences set in the browser, and the Origin header, which we use for CORS.

- You can also choose a minimum, maximum and default TTL (time to live). This sets how long files are cached in the edge locations before CloudFront forwards another request to the origin to determine whether the object (file) has been updated. Be careful when you set this up because CloudFront charges for each request to the origin server.

- CloudFront will also automatically compress content for web requests that include Accept-Encoding: gzip in the request header. Smaller file sizes are always good for improving total load times.
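To make the TTL settings more concrete, here is a simplified sketch of how the three values could interact with a Cache-Control: max-age header sent by the origin. The function names and the default values are assumptions made for this illustration, and the real rules have more cases (Expires, s-maxage, no-cache and so on).

```python
import math

SECONDS_PER_DAY = 86_400


def effective_ttl(origin_max_age, min_ttl=0, default_ttl=86_400, max_ttl=31_536_000):
    """Seconds an edge keeps an object before checking with the origin again.

    origin_max_age is the max-age value sent by the origin, or None when the
    origin sent no caching header at all (simplified model).
    """
    if origin_max_age is None:
        return default_ttl
    # Clamp the origin's value between the minimum and maximum TTL.
    return max(min_ttl, min(origin_max_age, max_ttl))


def max_revalidations_per_day(ttl_seconds):
    """Rough upper bound on origin checks per object, per edge cache, per day."""
    if ttl_seconds <= 0:
        raise ValueError("TTL must be positive to bound origin requests")
    return math.ceil(SECONDS_PER_DAY / ttl_seconds)


print(effective_ttl(None))                    # no header: default TTL applies
print(effective_ttl(60, min_ttl=300))         # origin asks for 60 s, minimum wins
print(max_revalidations_per_day(3600))        # a 1 hour TTL
```

The shorter the TTL, the fresher the files, but the more often each edge goes back to the origin.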

Any of these options and more can be configured for particular file extensions or for individual files, so different file types can get different setups. For instance, we’ve made a profile (CloudFront calls them behaviors) just for PHP files, so that they run on the origin server and dynamically return new values for each new request.
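As a rough illustration of per-file-type behaviors, the sketch below picks the first behavior whose path pattern matches the requested file. The `BEHAVIORS` list, its field names and its values are hypothetical, and Python's `fnmatchcase` only approximates CloudFront's own wildcard matching.

```python
from fnmatch import fnmatchcase

# Hypothetical behaviors, checked in order; the last entry acts as the default.
BEHAVIORS = [
    # PHP files: do not cache, so every request reaches the origin.
    {"pattern": "*.php", "default_ttl": 0, "forward_query_string": True},
    # Everything else: cache on the edge for a day.
    {"pattern": "*", "default_ttl": 86_400, "forward_query_string": False},
]


def match_behavior(path, behaviors=BEHAVIORS):
    """Return the first behavior whose pattern matches the request path."""
    for behavior in behaviors:
        if fnmatchcase(path, behavior["pattern"]):
            return behavior
    raise LookupError(f"no behavior matches {path!r}")


for path in ("/precheck.php", "/pipe.js"):
    print(path, match_behavior(path)["default_ttl"])
```

Ordering matters here: if the catch-all pattern came first, the PHP-specific behavior would never be reached.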

What We’ve Tested

To test the load times of our client files, we’ve set up a simple HTML page that contains just the Pipe recorder embed code.

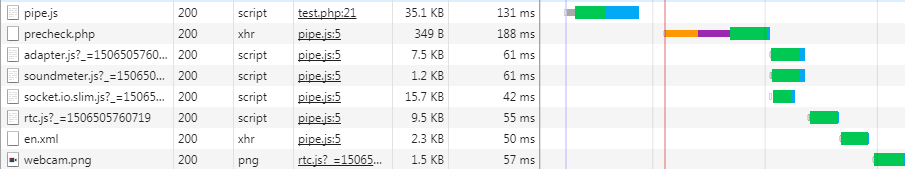

The second generation HTML 5 Pipe video recorder consists of several JS, PHP and XML files plus some images in PNG format. They are loaded in the following order:

- pipe.js

- precheck.php

- adapter.js, soundmeter.js and socket.io.slim.js are loaded at the same time

- rtc.js

- XML language file

- A single image which is first displayed in the initial screen, the rest are loaded as needed.

All files are loaded from the same domain with 2 exceptions:

- precheck.php is loaded from a different domain that points to a different server (it is dynamic in nature)

- socket.io.slim.js is served by its own CDN (Cloudflare)

In total, 8 files are loaded after 3 DNS lookups. They take up 200.84 KB on disk and 78 KB once compressed for delivery to the browser.
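For reference, the compression saving implied by those two sizes can be worked out as below. The helper name is made up for this example; the sizes are the ones quoted above.

```python
def compression_saving(original_kb, compressed_kb):
    """Fraction of bytes saved by compression (0.0 means no saving)."""
    return 1 - compressed_kb / original_kb


saving = compression_saving(original_kb=200.84, compressed_kb=78.0)
print(f"{saving:.1%}")  # prints 61.2%
```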

You can see here how it looks in the browser network tab:

The Tools

For testing we’ve used https://www.webpagetest.org – an open source project primarily developed and supported by Google – which provides a system for testing the performance of web pages from multiple locations around the world: the browser runs within a virtual machine and can be configured and scripted with a variety of connection and browser-oriented settings.

The tech being used behind the curtain has at its base the Navigation Timing API which is now supported across many of the modern desktop and mobile browsers.

The real benefit of Navigation Timing is that it exposes a lot of previously inaccessible data, such as DNS and TCP connect times, with high precision (microsecond timestamps), via a standardized performance.timing object in each browser. Hence, the data gathering process is very simple: load the page, grab the timing object from the user’s browser, and beacon it back to the analytics servers! By capturing this data, we can observe real-world performance of our applications as seen by real users, on real hardware, and across a wide variety of different networks.
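As a sketch of what can be derived from such a timing object once it has been beaconed back, the snippet below computes a few durations. The field names come from the Navigation Timing `performance.timing` interface; the sample values are invented and are assumed to be milliseconds since the Unix epoch.

```python
# Hypothetical performance.timing snapshot, as it might arrive at an analytics server.
timing = {
    "navigationStart": 1_500_000_000_000,
    "domainLookupStart": 1_500_000_000_005,
    "domainLookupEnd": 1_500_000_000_045,
    "connectStart": 1_500_000_000_045,
    "connectEnd": 1_500_000_000_120,
    "responseStart": 1_500_000_000_300,
    "loadEventEnd": 1_500_000_001_900,
}


def summarize(t):
    """Turn raw timestamps into the durations that matter for load time."""
    return {
        "dns_ms": t["domainLookupEnd"] - t["domainLookupStart"],
        "tcp_ms": t["connectEnd"] - t["connectStart"],
        "time_to_first_byte_ms": t["responseStart"] - t["navigationStart"],
        "page_load_ms": t["loadEventEnd"] - t["navigationStart"],
    }


for name, value in summarize(timing).items():
    print(f"{name}: {value}")
```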

We’ve run 3 tests before and after using a CDN, targeting the median fully loaded times in the following regions:

- New York, USA

- Amsterdam, NL (where our origin servers are already hosted)

- Sydney, Australia

- Brazil

- Mumbai, Asia

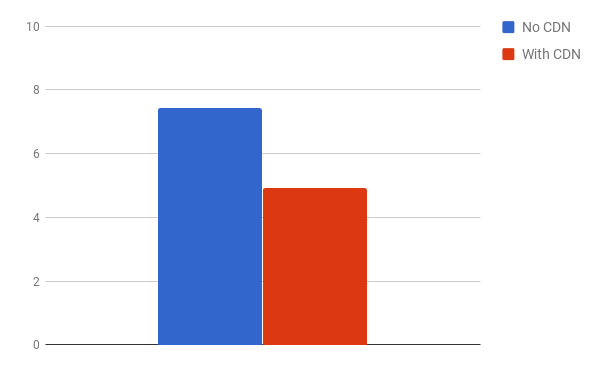

We’ve noticed reductions in total load times across the board between 30% and 50%.
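A percentage like this can be derived from the median of the runs before and after the change. The sketch below shows the calculation with made-up timings, not the measured results from our tests.

```python
from statistics import median


def load_time_reduction(before_runs, after_runs):
    """Relative reduction in the median load time (0.25 means 25% faster)."""
    before = median(before_runs)
    after = median(after_runs)
    return (before - after) / before


# Hypothetical fully loaded times in seconds, three runs each.
without_cdn = [4.1, 3.9, 4.0]
with_cdn = [2.6, 2.4, 2.5]

print(f"{load_time_reduction(without_cdn, with_cdn):.1%}")
```

Using the median rather than the mean keeps a single unusually slow run from skewing the comparison.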

Here are the load time comparisons, without and with the CDN, for all of the tested regions. Load times are expressed in seconds:

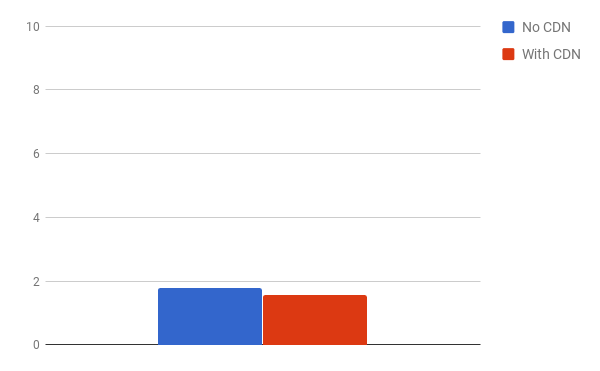

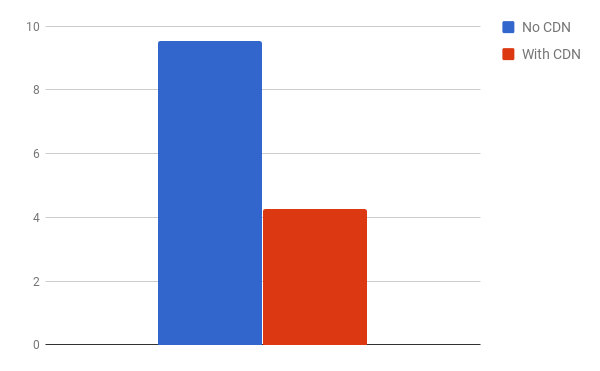

Amsterdam, NL

Geographically, our origin server is closest to Amsterdam, so as expected the difference here is small: just 0.2 seconds in total load time.

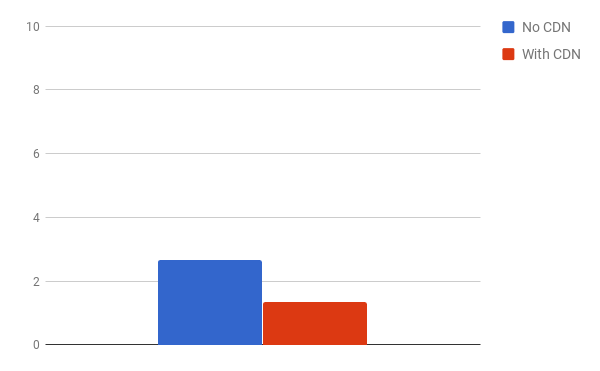

New York, USA

Here we see a difference in load times of almost 1.5 seconds, which is quite noticeable.

Sydney, Australia

The difference is clearly visible here: load times are roughly cut in half, dropping from under 10 seconds to just over 4 seconds.

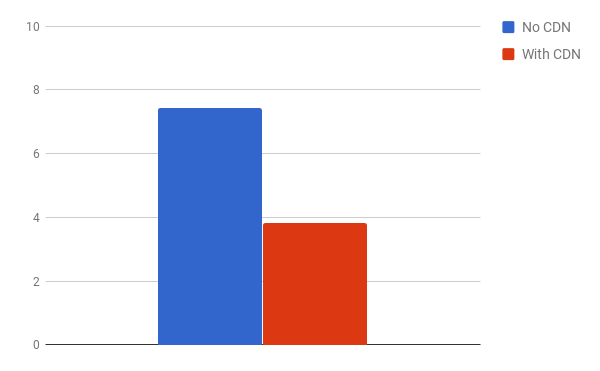

Brazil

Here, too, the loading time dropped by about half.

Mumbai, Asia

Loading the files through the CDN from Mumbai reduced loading times by about a third.

Conclusion

When you have clients from all over the world, having a CDN is a must for improving user experience.

As can be seen in the results above, the further away you are geographically from the origin server, the higher the load times are.

Having a CDN distribute the files to edge servers that are closest to the user dramatically improves page load times.

Lowering the number of DNS lookups and HTTP requests also helps, but more on that in a later post.